anthropic

The anthropic provider supports all APIs that use the same interface for the /v1/messages endpoint.

Example:
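As a minimal sketch, a client that uses this provider might be declared as follows. The client name `MyClient` and the model string are illustrative assumptions, not required values.

```baml
// Hypothetical client name; any identifier works.
client<llm> MyClient {
  provider anthropic
  options {
    // Illustrative model name; BAML does not check that it exists.
    model "claude-3-5-sonnet-20240620"
    temperature 0
  }
}
```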

BAML-specific request options

These unique parameters (aka options) modify the API request sent to the provider.

You can use these to modify the headers and base_url, for example.

api_key

Will be passed as a bearer token. Default: env.ANTHROPIC_API_KEY

Authorization: Bearer $api_key

base_url

The base URL for the API. Default: https://api.anthropic.com

headers

Additional headers to send with the request.

Unless you specify a different value, we inject the following headers:

Example:
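The sketch below shows how api_key, base_url, and headers could be set together on one client. The environment variable name, the header name, and the header value are hypothetical placeholders chosen for illustration.

```baml
client<llm> MyClient {
  provider anthropic
  options {
    // Hypothetical environment variable; the default is env.ANTHROPIC_API_KEY.
    api_key env.MY_ANTHROPIC_KEY
    // Shown with the default value; point this at a compatible endpoint if needed.
    base_url "https://api.anthropic.com"
    model "claude-3-5-sonnet-20240620"
    headers {
      // Hypothetical custom header, sent in addition to the injected ones.
      "X-My-Header" "my-value"
    }
  }
}
```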

default_role

The role to use if the role is not in the allowed_roles. Default: "user" usually, but some models like OpenAI’s gpt-5 will use "system"

If “user” is in allowed_roles, it is used; otherwise the first role in allowed_roles is picked.

allowed_roles

Which roles should we forward to the API? Default: ["system", "user", "assistant"] usually, but some models like OpenAI’s o1-mini will use ["user", "assistant"]

When building prompts, any role not in this list will be set to the default_role.
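To illustrate, the sketch below restricts a hypothetical client to the user and assistant roles. Under the rule above, a system message in the prompt would then be sent with the default_role instead.

```baml
client<llm> NoSystemRoleClient {
  provider anthropic
  options {
    model "claude-3-5-sonnet-20240620"
    // Only these roles are forwarded to the API.
    allowed_roles ["user", "assistant"]
    // Any other role (for example "system") is rewritten to this one.
    default_role "user"
  }
}
```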

remap_roles

A mapping to transform role names before sending to the API. Default: {} (no remapping)

For google-ai provider, the default is: { "assistant": "model" }

This allows you to use standard role names in your prompts (like “user”, “assistant”, “system”) but send different role names to the API. The remapping happens after role validation and default role assignment.

Example:
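A role remapping could be sketched as below. The user to "human" mapping matches the behavior described in this section; the assistant to "ai" mapping and the client name are assumptions added for illustration.

```baml
client<llm> RemappedRolesClient {
  provider anthropic
  options {
    model "claude-3-5-sonnet-20240620"
    remap_roles {
      // Prompts keep using "user"; the API receives "human".
      user "human"
      // Assumed second mapping, for illustration only.
      assistant "ai"
    }
  }
}
```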

With this configuration, {{ _.role("user") }} in your prompt will result in a message with role “human” being sent to the API.

allowed_role_metadata

Which role metadata should we forward to the API? Default: []

For example you can set this to ["cache_control"] to forward the cache policy to the API.

If you do not set allowed_role_metadata, we will not forward any role metadata to the API even if it is set in the prompt.
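A client that forwards cache_control metadata might look like the following sketch. The client name and model are illustrative, and depending on the API version, prompt caching may need additional provider-side settings not shown here.

```baml
client<llm> ClaudeWithCaching {
  provider anthropic
  options {
    model "claude-3-5-sonnet-20240620"
    max_tokens 1000
    // Forward only the cache_control metadata key; all others are dropped.
    allowed_role_metadata ["cache_control"]
  }
}
```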

Then in your prompt you can use something like:
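For instance, role metadata can be attached as keyword arguments to `_.role(...)`. This is a sketch with a hypothetical template name; the cache_control value is only forwarded when the client lists "cache_control" in allowed_role_metadata.

```baml
template_string CachedInstructions() #"
  {{ _.role("user", cache_control={"type": "ephemeral"}) }}
  This message carries cache_control metadata.
  {{ _.role("user") }}
  This message carries no metadata.
"#
```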

You can use the playground to view the raw curl request and see exactly what is being sent to the API.

supports_streaming

Whether the internal LLM client should use the streaming API. Default: true

Then in your prompt you can use something like:
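A minimal sketch, assuming the hypothetical names `NonStreamingClaude` and `WriteStory`: the client turns off the streaming API, and a function then uses that client like any other.

```baml
client<llm> NonStreamingClaude {
  provider anthropic
  options {
    model "claude-3-5-sonnet-20240620"
    max_tokens 1000
    // The internal LLM client will not use the streaming API.
    supports_streaming false
  }
}

function WriteStory() -> string {
  client NonStreamingClaude
  prompt #"
    Write a short story.
  "#
}
```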

finish_reason_allow_list

Which finish reasons are allowed? Default: null

Will raise a BamlClientFinishReasonError if the finish reason is not in the allow list. See Exceptions for more details.

Note, only one of finish_reason_allow_list or finish_reason_deny_list can be set.

For example, you can set this to ["stop"] to allow only the stop finish reason. All other finish reasons (e.g. length) will be treated as failures that PREVENT fallbacks and retries (similar to parsing errors).
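An allow list could be configured as in this sketch. The value "stop" is taken from the text above; the exact finish reason strings depend on what the provider reports, so treat the value as an assumption to check against your provider.

```baml
client<llm> StopOnlyClient {
  provider anthropic
  options {
    model "claude-3-5-sonnet-20240620"
    // Any finish reason not listed here raises BamlClientFinishReasonError.
    finish_reason_allow_list ["stop"]
  }
}
```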

In your code, catch BamlClientFinishReasonError around the function call to handle a finish reason that is not in the allow list.

finish_reason_deny_list

Which finish reasons are denied? Default: null

Will raise a BamlClientFinishReasonError if the finish reason is in the deny list. See Exceptions for more details.

Note, only one of finish_reason_allow_list or finish_reason_deny_list can be set.

For example, you can set this to ["length"] to stop the function from continuing if the finish reason is length (e.g. the LLM output was cut off because it was too long).
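A deny list could be sketched the same way. The value "length" comes from the text above, and the client name is hypothetical; the finish reason strings your provider reports may differ.

```baml
client<llm> NoTruncationClient {
  provider anthropic
  options {
    model "claude-3-5-sonnet-20240620"
    // A finish reason listed here raises BamlClientFinishReasonError.
    // Do not set finish_reason_allow_list on the same client.
    finish_reason_deny_list ["length"]
  }
}
```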

In your code, catch BamlClientFinishReasonError around the function call to handle a finish reason that is in the deny list.

media_url_handler

Controls how media URLs are processed before sending to the provider. This allows you to override the default behavior for handling images, audio, PDFs, and videos.

Options

Each media type can be configured with one of these modes:

- send_base64: Always download URLs and convert to base64 data URIs
- send_url: Pass URLs through unchanged to the provider
- send_url_add_mime_type: Ensure MIME type is present (may require downloading to detect)
- send_base64_unless_google_url: Only process non-gs:// URLs (keep Google Cloud Storage URLs as-is)

Provider Defaults

If not specified, each provider uses these defaults:

When to Use

- Use send_base64 when your provider doesn’t support external URLs and you need to embed media content
- Use send_url when your provider handles URL fetching and you want to avoid the overhead of base64 conversion
- Use send_url_add_mime_type when your provider requires MIME type information (e.g., Vertex AI)
- Use send_base64_unless_google_url when working with Google Cloud Storage and you want to preserve gs:// URLs

URL fetching happens at request time and may add latency. Consider caching or pre-converting frequently used media when using send_base64 mode.

Anthropic’s default behavior is to convert PDFs to base64 (send_base64) while keeping other media types as URLs (send_url). This is because Anthropic’s API requires PDFs to be base64-encoded.
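As a sketch of overriding these defaults, the client below embeds images as base64 while leaving PDFs on base64 as Anthropic requires. The client name is hypothetical, and the per-media keys (image, audio, pdf, video) are assumed from the media types listed above.

```baml
client<llm> InlineImagesClient {
  provider anthropic
  options {
    model "claude-3-5-sonnet-20240620"
    media_url_handler {
      // Download image URLs at request time and embed them as base64.
      image "send_base64"
      // Matches the Anthropic default described above.
      pdf "send_base64"
      audio "send_url"
      video "send_url"
    }
  }
}
```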

Provider request parameters

These are other parameters that are passed through to the provider, without modification by BAML. For example if the request has a temperature field, you can define it in the client here so every call has that set.

Consult the specific provider’s documentation for more information.
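For example, pass-through parameters sit next to the BAML-specific options in the same options block. This sketch uses a hypothetical client name and illustrative values.

```baml
client<llm> DeterministicClaude {
  provider anthropic
  options {
    model "claude-3-5-sonnet-20240620"
    // Forwarded unchanged to the provider on every call.
    temperature 0
    max_tokens 1024
  }
}
```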

system

BAML will automatically construct this field for you from the prompt, if necessary.

Only the first system message will be used; all subsequent ones will be cast to the assistant role.

messages

BAML will automatically construct this field for you from the prompt.

stream

BAML will automatically construct this field for you based on how you call the client in your code.

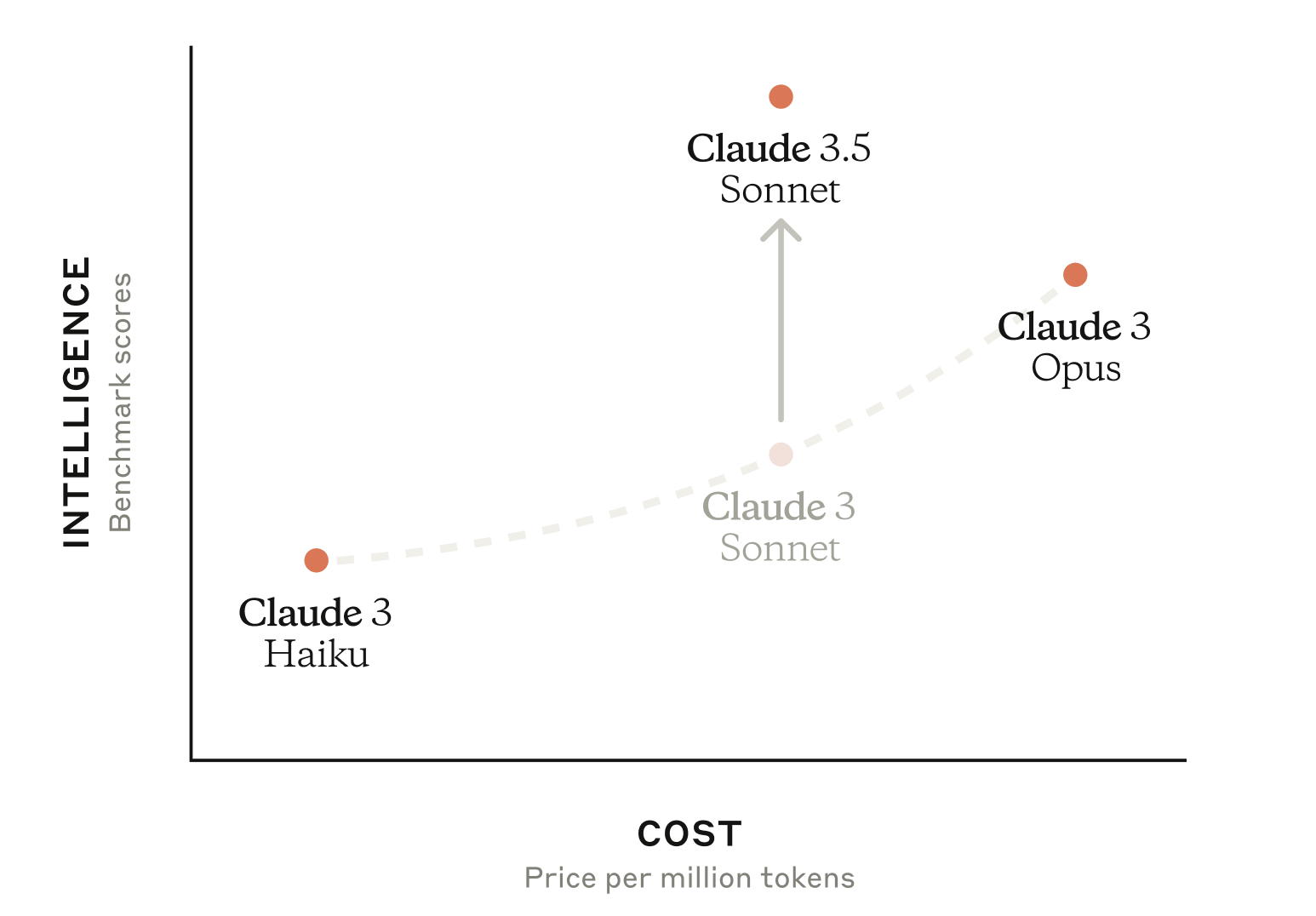

model

The model to use.

See the Anthropic docs for the latest list of all models. You can pass any model name you wish; we will not check if it exists.

max_tokens

The maximum number of tokens to generate. Default: 4069

For all other options, see the official Anthropic API documentation.